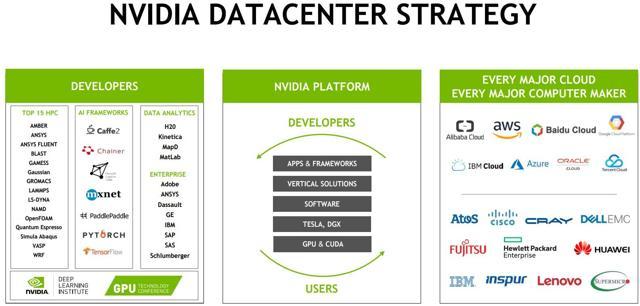

And all of this has to happen just-in-time, so that we minimize waste. That requires finding solutions to storage and inventory management and especially how to dispose of them in an optimal manner. Enjoy and feel free to salivate :)ġ) Ability to problem solve: Since we don’t visit the farm frequently, when we do go, we get a LOT of them all at once. But wait! What do mangoes have to do with skills and #CrackerjackCompetencies? Companies including Google and Amazon are also designing their own custom AI chips for inference.I spent 6 weeks in Bangalore in June-July and was treated to more delectable mangoes than I have ever consumed! The organically grown mangoes came from a farm that our family owns in the outskirts of Bangalore. The announcement comes as Nvidia's primary GPU rival, AMD, recently announced its own AI-oriented chip, the MI300X, which can support 192GB of memory and is being marketed for its capacity for AI inference. "Having larger memory allows the model to remain resident on a single GPU and not have to require multiple systems or multiple GPUs in order to run," Buck said. Nvidia also announced a system that combines two GH200 chips into a single computer for even larger models.

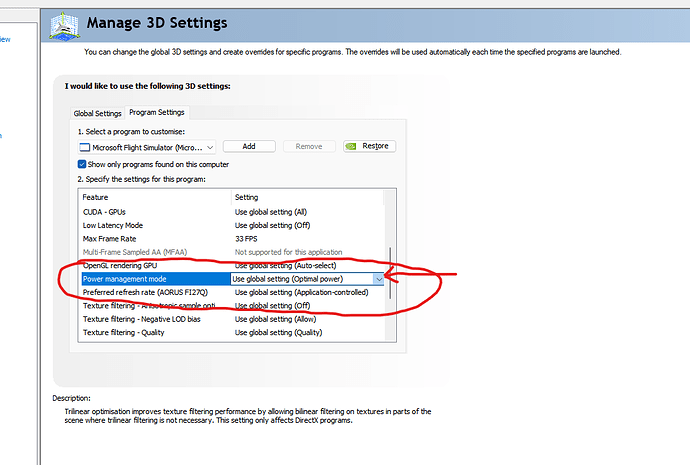

Nvidia's H100 has 80GB of memory, versus 141GB on the new GH200. Nvidia's new GH200 is designed for inference since it has more memory capacity, allowing larger AI models to fit on a single system, Nvidia VP Ian Buck said on a call with analysts and reporters on Tuesday. "The inference cost of large language models will drop significantly." "You can take pretty much any large language model you want and put it in this and it will inference like crazy," Huang said. But unlike training, inference takes place near-constantly, while training is only required when the model needs updating. Like training, inference is computationally expensive, and it requires a lot of processing power every time the software runs, like when it works to generate a text or image. Then the model is used in software to make predictions or generate content, using a process called inference. Oftentimes, the process of working with AI models is split into at least two parts: training and inference.įirst, a model is trained using large amounts of data, a process that can take months and sometimes requires thousands of GPUs, such as, in Nvidia's case, its H100 and A100 chips. Nvidia representatives declined to give a price. The new chip will be available from Nvidia's distributors in the second quarter of next year, Huang said, and should be available for sampling by the end of the year. He added, "This processor is designed for the scale-out of the world's data centers."

"We're giving this processor a boost," Nvidia CEO Jensen Huang said in a talk at a conference on Tuesday. But the GH200 pairs that GPU with 141 gigabytes of cutting-edge memory, as well as a 72-core ARM central processor. Nvidia's new chip, the GH200, has the same GPU as the company's current highest-end AI chip, the H100. Personal Loans for 670 Credit Score or Lower Personal Loans for 580 Credit Score or Lower Best Debt Consolidation Loans for Bad Credit

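To make the memory figures in the Nvidia news above concrete (80GB on the H100, 141GB on the GH200, 192GB on AMD's MI300X), here is a rough back-of-the-envelope sketch in Python. It assumes that only the model's weights must fit in GPU memory, that each parameter takes 2 bytes in FP16 (4 in FP32, 1 in INT8), and that "GB" means 10^9 bytes. It ignores the KV cache, activations and runtime overhead, so real deployments need noticeably more headroom. The 70-billion-parameter model and the helper names `weight_memory_gb` and `gpus_needed` are hypothetical, for illustration only.

```python
import math

# Memory capacities quoted in the article, in decimal gigabytes (assumption: 1 GB = 1e9 bytes).
GPU_MEMORY_GB = {
    "H100": 80,
    "GH200": 141,
    "MI300X": 192,
}

# Bytes needed to store one parameter in common weight formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Memory needed to hold the model weights alone, in decimal GB (hypothetical helper)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def gpus_needed(num_params: float, gpu: str, dtype: str = "fp16") -> int:
    """Lower bound on how many identical GPUs are needed to hold the weights.

    Ignores the KV cache, activations and framework overhead, so this is an
    optimistic estimate rather than a deployment plan.
    """
    return math.ceil(weight_memory_gb(num_params, dtype) / GPU_MEMORY_GB[gpu])


if __name__ == "__main__":
    params = 70e9  # a hypothetical 70-billion-parameter model
    for gpu in GPU_MEMORY_GB:
        print(gpu, gpus_needed(params, gpu))
```

Under these assumptions, a 70B-parameter model in FP16 needs about 140GB just for its weights: two 80GB H100s, but a single 141GB GH200 or 192GB MI300X. That is the "remain resident on a single GPU" effect Buck describes, although on a GH200 the fit would be very tight once the KV cache is added.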